The Intent Gap

Software testing can generally only prove the presence of bugs, not their absence. This asymmetry limits how far we can scale.

As software grows, each new component introduces failure modes that become impractical to detect by testing. This makes it difficult to ensure our intent is met. As a result, software engineering teams have come to rely on craftsmanship, developing complicated hierarchies of trust and best practices.

But the higher you want to build, the more craftsmanship runs up against the limits of the material itself. Clay might be easy to shape and quick to build with, but houses built out of clay need constant maintenance, and can only rise so high before collapsing under their own weight.1 What if we could build with a different material?

Programming languages like Idris and Lean offer exactly that: they let us prove the absence of bugs. They do this by letting us express our intent using code, and then verify this intent is satisfied by compiling. To see what this means, consider sorting a list. In most languages, you’d write a function with a type such as:

sort : List Int -> List Int.

This signature declares your intent to produce a list of integers, so the compiler will reject your code if you try to return a string. However, if you return a list that isn’t sorted, the compiler stays silent. This because you created an intent gap: the difference between

- What you’ve communicated to the compiler (intent to produce any list of integers), and

- Your actual intent (to sort a list).

This gap exists because you know something you never declared to the compiler. In those cases, you need to test your code, or manually inspect the implementation.

This is how most programmers have been taught to program: trained to manage the intent gap, accepting it as an inherent constraint rather than a solvable problem. But in many cases, the intent gap can be entirely closed. In Idris, you can write

sort : (xs : List Int) -> SortedList xs

where SortedList xs includes not just a list, but a proof that the list is sorted2.

This means that to implement this function you must produce both the list and the proof, with the bug of returning an unsorted list rendered impossible: the compiler will simply reject your program.

And if the compiler accepts your program, you don’t need to test whether your intent is met.3

If the specification matches your intent, there can be no bugs.

Languages like Idris and Lean give us a glimpse of what programming can be. Instead of writing code and then manually verifying it, what if we were able to focus on encoding our intent? What if we were able to shift the burden from “does my code have bugs?” to “does my specification capture my intent?”. In such cases verification becomes mechanical: if it compiles, it’s correct.

Very importantly, these languages don’t enable us to merely verify existing programs. The earlier clay metaphor is worth returning to: clay was superseded by reinforced concrete and carbon fiber, materials that enabled genuinely new things: power grids, space stations, undersea cables, and rockets. These constructions were only discovered after the material itself existed.

In the same way, the pressure of scale will eventually push us toward principled software. We don’t yet know what this will allow us to build; those possibilities will be discovered only after the scaffolding is in place.

How do we get there?

There are a few obstacles on the way. First, specifying intent is hard. We often don’t know what we want. The process of writing code, like writing prose, reveals what we actually meant to say. True understanding of our intent demands deep introspection and emerges only as a result of it. And this introspection is difficult to measure and incentivise.

Second, specifications have a surprising amount of detail. Take something as seemingly straightforward as database invariants: they require pages of mathematical scaffolding just to state the basics in a formally verified language. Even with a PhD in the field, coding this up takes months of intense concentration. Under normal industry conditions, with tight deadlines and shifting business requirements, you seldom have that luxury. It’s much easier to just use MySQL and vouch to your boss that your implementation is correct.

For these reasons – because understanding intent is hard, and implementing it even harder – proofs have taken a backseat in mainstream programming. Humans prefer languages convenient to them, so these languages have proliferated. Languages optimised for correctness remain niche.

But that’s starting to change. AI systems do not operate under the same pressures as we do, and are starting to generate more and more code. Just as new paradigms overturned the once dominant Cobol, the mechanics of scale and proofs will cause correctness-first languages to overturn human-convenience ones. Only this time, most of the code won’t be written by humans.

We’re already seeing the beginnings. Transformer-based models are writing an increasing amount of Lean code, and helping mathematicians solve open research problems. While this is restricted only to theorem proving, it’s only a matter of time before it becomes clear that the same approaches are applicable to general programming. That’s when we’ll see the next AI wave: scaling software not at the expense of safety, but because of it.

Getting there, however, requires crossing some obstacles.

The fundamental obstacle is that of data. Transformer-based models excel in data-rich domains, but correctness-first languages are not one of them. These languages are concise by design: a few hundred lines of specification can define hundreds of thousands of lines of implementation. This makes them data scarce, starving LLMs of the training data.

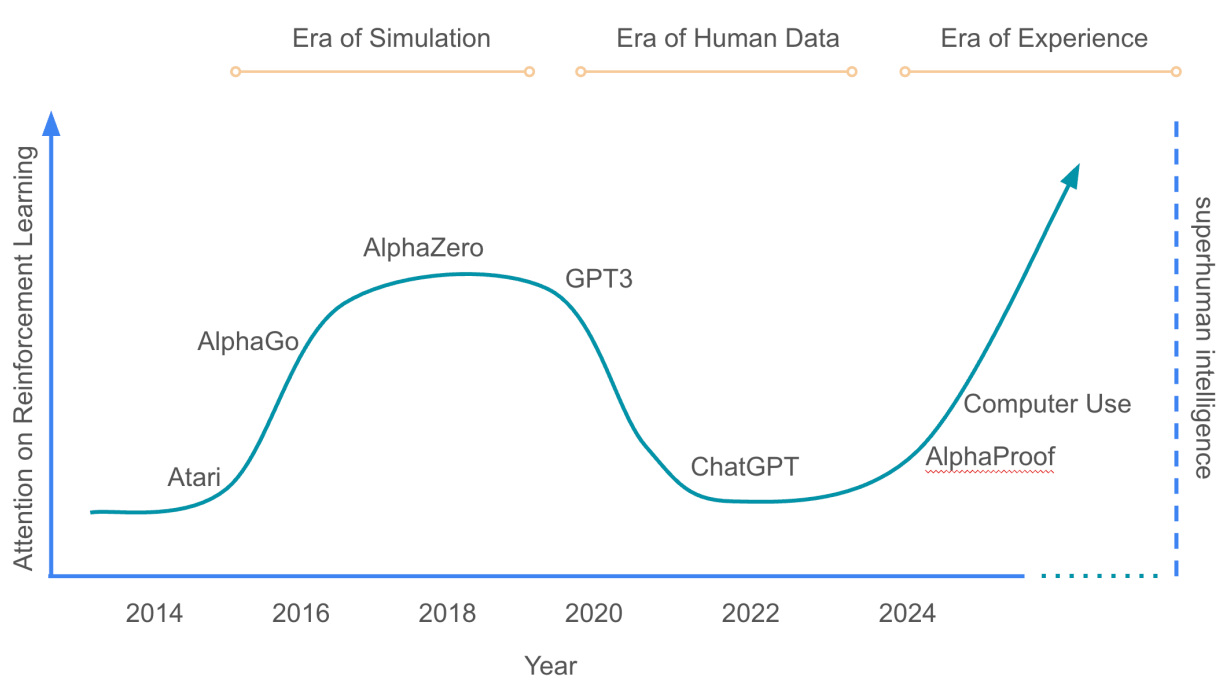

Of course, we can build scaffolding around LLMs to compensate. This is what most current efforts in AI for mathematics amount to, and they achieve fantastic results. But in domains where we know how to train models that utilise structure during training, we see even more dramatic performance improvements. Chess is one of those. The system AlphaZero utilises the game structure during training and reaches superhuman ELO (>3400) with ~30x fewer parameters than GPT-4 (<60 million vs 1.8 trillion).

These are stark results, and ones that give us a glimpse of what is possible by integrating type systems into the training loop of code generation models. I have written about this in detail here.

Building such systems, though, does not involve just research, but also infrastructure development. Current tooling is built in Python which, while it has some scaffolding to help us use types to filter out invalid outputs, is not fundamentally built around the idea that types can help us reason about programs. And in dependently typed languages in which we’d want to build such neural networks, the infrastructure for training them – starting from tensor processing and automatic differentiation facilities – does not exist. Most attempts to build them only replicate what is, rather than reimagining what can be.

This is a large challenge, involving many moving pieces. But I believe it’s worth it. If AlphaZero and similar systems hadn’t previously shown us superhuman performance in chess, today we would’ve believed GPT-style models were the pinnacle of what’s possible. I believe the analogy translates clearly to software development. Many researchers seem to believe that scaffolding around today LLM-based models is where code generation tops out. But I wonder if that is only because we haven’t built the analogue of AlphaZero for code generation.

When this happens, I suspect we will see another wave AI development: one that achieves scale not at the expense of safety, but because of it.

My research is centered on making that happen. I work on three fronts:

- New architectures: Understanding old, and designing new neural networks that natively consume and produce structured data.

- Mathematical Foundations: Formalising what it means to generalise when your inputs are not numbers, but datatypes.

- Infrastructure: Building the stack required to train such networks in dependently-typed languages: tensor processing, automatic differentiation, and elaborator integration.

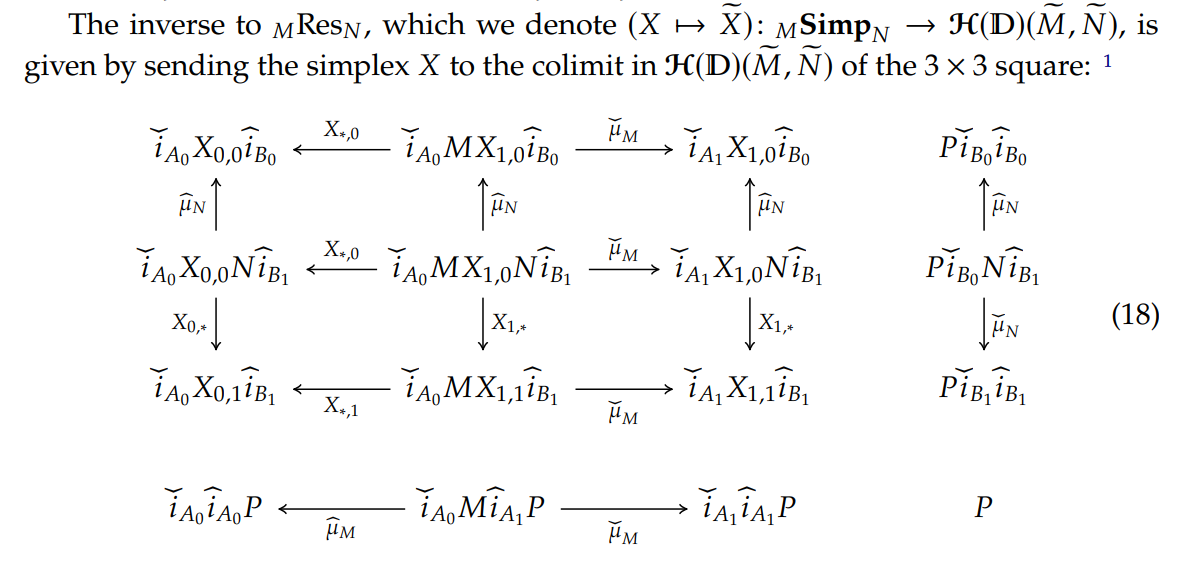

In all of these, I use category theory, the mathematics of structure and composition, as a central glue.

Why category theory?

Because the organisational principles needed to build better AI systems turn out to be the same principles needed to build better programming languages, and to do science in general.4

Any system that generalises well has discovered compositional structure in its domain. To generalise is to discover rules that apply parametrically, regardless of the size or shape of the input. And the more consistent and modular you make your rules, the more it looks like you’re following principles of category theory.

I suspect any sufficiently capable reasoning system has, whether by design or discovery, converged on these principles.

This raises a question: what might a formal theory of reasoning look like?

Describing a theory that doesn’t yet exist is a hard thing to do well. You can easily get carried away, or be too cautious, or describe something that turns out not to match the shape of the actual theory when it arrives. Rather than try to do this directly, I’ll look at a field that once stood where deep learning stands today, and see how that field evolved.

Consider alchemy.

For centuries, alchemists mixed compounds through trial and error, chasing the elixir of life and panacea, the cure for all diseases. But this does not make alchemists bad scientists. It was the best they knew back then. Alchemists encountered new and unexplained phenomena, and made genuine discoveries. They discovered phosphorus, gunpowder5, and developed lab equipment. But because they were not aware of the fundamental principles, they couldn’t synthesise penicillin, build chemical manufacturing plants, or cure diseases at scale.

Something else was required. Over time, we discovered the atom. We built the periodic table. We understood the idea of molecules, bonds, reactions, and thermodynamics.

The result was chemistry, which is a completely different field. Chemistry is reliable, controllable, and scalable in ways alchemy never could be. We predicted new elements. We built petrochemical refineries, synthesised organic compounds, and unlocked the ability to capture carbon from the atmosphere. Today, we can actually cure many of the diseases alchemists were trying and failing to cure. But we do it in a very different way.

These incredible advancements were not created by scaling up alchemy. They required that we discover fundamental principles first, and develop a whole new science: one unimaginable to alchemists.

Deep learning today feels like alchemy.

We’re making remarkable strides, and LLMs are impressive feats of engineering. But we have very little understanding of their internals. Nobody predicted phenomena like double descent, or the lottery ticket hypothesis – phenomena that seemingly explain a part of their behaviour. We’re collecting an abundance of experimental data, but in our pursuit we have not been able to glue it all into a cohesive theory.

In this pursuit, there is something self-referential.

To find a concise way to express how intelligent systems find good models of the world, it may be necessary to first reason better ourselves: to structure our own thoughts more clearly. If we are poor learners, we will not be able to describe learning well.

Category theory is a tool for learning. It’s a way to structure thought; one which is both general enough to allow us to reason about anything, but also one precise enough to prevent us from getting lost in meaning.

This ability to structure thought and to learn – intelligence – has often carried a mythical quality. Throughout millennia, it resisted precise formalisation alongside related concepts like consciousness, autopoiesis, and life. This was largely because the tools for formal specification were inadequate. But with the advent of 21st century mathematics, I believe we have the right abstractions.

At least, to ask the right questions.

Could category theory be the formal theory of reasoning we’re after?

I am a fan of the following quote from Bartosz Milewski’s book Category Theory for Programmers: “There is an unfinished gothic cathedral in Beauvais, France, that stands witness to this deeply human struggle with limitations. It was intended to beat all previous records of height and lightness, but it suffered a series of collapses. Ad hoc measures like iron rods and wooden supports keep it from disintegrating, but obviously a lot of things went wrong. From a modern perspective, it’s a miracle that so many gothic structures had been successfully completed without the help of modern material science, computer modelling, finite element analysis, and general math and physics. I hope future generations will be as admiring of the programming skills we’ve been displaying in building complex operating systems, web servers, and the internet infrastructure. And, frankly, they should, because we’ve done all this based on very flimsy theoretical foundations. We have to fix those foundations if we want to move forward.”↩︎

To sort a list

xswe need to provide a listys, together with proofs that the elements ofysare in ascending order, and thatysis a permutation ofxs. For details see this gist.↩︎You might ask: “What if the function sorts a list, but is not efficiently implemented?” In that case, your intent isn’t to sort a list, it’s to sort a list efficiently, and your specification needs to be refined. That is, the implementation matches the specification, but the specification does not match your intent.↩︎

Did you know that gunpowder was invented as an accidental byproduct from experiments seeking to create the elixir of life?↩︎